3985

Feasibility of Multi-contrast MR imaging via deep learning

Shanshan Wang1, Tao Zhao1,2, Ningbo Huang1,3, Sha Tan1,4, Yuanyuan Liu1, Leslie Ying5, and Dong Liang1

1Paul C. Lauterbur Research Center for Biomedical Imaging, SIAT, Chinese Academy of Sciences, Shenzhen, People's Republic of China, 2College of Mining and Safety Engineering, Shandong University of Science and Technology, Qingdao, People's Republic of China, 3School of Computer Science and Technology, Changchun University of Science and Technology, Changchun, People's Republic of China, 4School of Information Engineering, Guangdong University of Technology, Guangzhou, People's Republic of China, 5Department of Biomedical Engineering and Department of Electrical Engineering, The State University of New York, NY, United States

Synopsis

This paper develops a deep learning based multi-contrast MR imaging method. Unlike existing methods, which mainly draw prior information from the target structure or a few reference images, we design a multi-contrast convolutional neural network that automatically learns feature descriptors of the multi-contrast correlations and identifies the nonlinear mapping from undersampled to fully sampled images, using a large number of existing multi-contrast MR images as training samples. Once the network is trained, it serves as a predictor for online multi-contrast MR imaging. Experimental results on a multi-contrast in vivo dataset show that the proposed method can restore lost information from the undersampled MR images while preserving their contrasts.

Introduction

Multi-contrast MR imaging is a widely used and important technique for thorough clinical diagnosis and research analysis because it provides various contrast information for the same anatomical section1,2. Nevertheless, long imaging time is a major hurdle to its wider application. To accelerate multi-contrast MR acquisitions, a popular direction is to explore prior knowledge of the to-be-reconstructed multi-contrast MR images and then use it for joint reconstruction from undersampled data3,4,5. In this paper, instead of only considering the prior information from the target images, we employ deep learning to reconstruct multi-contrast MR images by drawing valuable knowledge from previously acquired big data. A convolutional neural network is designed not only to learn automatic feature descriptors of the multi-contrast correlations but also to identify the nonlinear relationship between the images reconstructed from the undersampled data and those from the fully sampled data6,7.

Theory and method

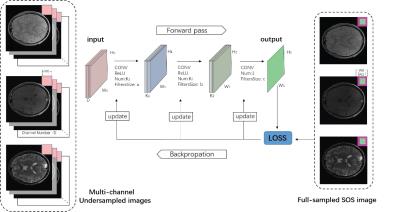

The two essential components of deep learning based multi-contrast MR imaging are multi-contrast datasets and a deep learning network. For the datasets, we employ typical SIEMENS turbo Flash protocols to generate the MR data. Namely, we adjusted the repetition time (TR), echo time (TE) and turbo factor to provide the typical contrast configurations for PD-, T1- and T2-weighted images. All three contrast configurations were used to image the same subject with the same FOV and matrix size. The fully sampled multi-contrast data are then retrospectively subsampled to generate the input data for the network, while the labels are the images generated from the fully sampled data. For the deep learning network, we choose a convolutional neural network because of its strong capability in automatic feature extraction, structure preservation and nonlinear relationship description. We designed a three-layer convolutional neural network, as shown in Fig. 1. With big data and GPU support, once the network is trained, it can be used to predict the online multi-contrast MR images.

Experiment
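The construction of input/label pairs by retrospective subsampling can be illustrated with a minimal sketch. The helper names `contiguous_mask_1d` and `make_training_pair` are hypothetical, the data are treated as single-coil magnitude images, and the mask is assumed to be a contiguous, centred block of phase-encode lines; the exact mask layout is not specified in this abstract.

```python
import numpy as np

def contiguous_mask_1d(n_lines: int, keep_fraction: float) -> np.ndarray:
    """Boolean mask keeping a centred contiguous block of k-space lines (assumed layout)."""
    n_keep = int(round(n_lines * keep_fraction))
    start = (n_lines - n_keep) // 2
    mask = np.zeros(n_lines, dtype=bool)
    mask[start:start + n_keep] = True
    return mask

def make_training_pair(image: np.ndarray, keep_fraction: float = 1 / 3):
    """Return (zero-filled undersampled image, fully sampled label image)."""
    # Centred 2D k-space of the fully sampled image.
    kspace = np.fft.fftshift(np.fft.fft2(np.fft.ifftshift(image)))
    # Undersample along the first axis only (1D mask broadcast over columns).
    mask = contiguous_mask_1d(image.shape[0], keep_fraction)
    undersampled = kspace * mask[:, None]
    # Zero-filled inverse transform gives the network input.
    zero_filled = np.fft.fftshift(np.fft.ifft2(np.fft.ifftshift(undersampled)))
    return np.abs(zero_filled), np.abs(image)

# Toy stand-in for one fully sampled 256*256 slice (random data, illustration only).
rng = np.random.default_rng(0)
label_image = rng.random((256, 256))
network_input, label = make_training_pair(label_image)
```

In this sketch the same mask would be applied to the PD-, T1- and T2-weighted slices of a subject, so that each contrast yields one input/label pair.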

We recruited 33 volunteers and used a 3T scanner (SIEMENS MAGNETOM Trio) with a 12-channel head coil of our institute to acquire 2D fully sampled multi-slice PD-, T1- and T2-weighted brain datasets. Informed consent was obtained from the imaging subjects in compliance with the Institutional Review Board policy. Undersampled measurements were retrospectively obtained using a 1D partial Fourier mask. We used three convolutional layers for the network with the following configuration: 128 nodes with a kernel size of 9*9 for the 1st layer, 64 nodes with a kernel size of 5*5 for the 2nd layer, and 1 node with a kernel size of 5*5 for the 3rd layer. The offline training took almost four days on a workstation equipped with 128G memory and a 16-core processor (Intel Xeon (R) CPU E5-2680 V3 @2.5GHz). The trained network was then evaluated on an in-vivo sagittal brain dataset acquired on a 3T scanner (SIEMENS MAGNETOM Trio) with a 12-channel head coil using the three protocols (PD: TR=5000ms, TE=11ms, turbo factor=8; T1: TR=1000ms, TE=11ms, turbo factor=4; T2: TR=5000ms, TE=76ms, turbo factor=16), with a FOV of 21cm, slice thickness of 2.0mm and matrix size of 256*256.

Result and discussion
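To make the three-layer configuration used in the experiment concrete (128 nodes at 9*9, 64 nodes at 5*5, 1 node at 5*5), the following is a rough NumPy sketch of a forward pass. Several details are assumptions not stated in this abstract: a single input channel, no padding ("valid" convolution), ReLU between layers, and small random weights in place of trained ones. The names `conv2d_valid`, `init_params` and `forward` are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

# (output channels, kernel size) per layer, as listed in the text.
LAYERS = [(128, 9), (64, 5), (1, 5)]

def conv2d_valid(x, weights, bias):
    """Multi-channel 2D cross-correlation without padding.

    x: (C, H, W); weights: (O, C, k, k); bias: (O,). Returns (O, H-k+1, W-k+1).
    """
    k = weights.shape[-1]
    windows = sliding_window_view(x, (k, k), axis=(1, 2))  # (C, H', W', k, k)
    out = np.einsum("chwij,ocij->ohw", windows, weights, optimize=True)
    return out + bias[:, None, None]

def init_params(in_channels, rng):
    """Random placeholder weights; a trained network would supply these."""
    params = []
    for out_channels, k in LAYERS:
        w = rng.normal(0.0, 1e-3, size=(out_channels, in_channels, k, k))
        params.append((w, np.zeros(out_channels)))
        in_channels = out_channels
    return params

def forward(x, params):
    for i, (w, b) in enumerate(params):
        x = conv2d_valid(x, w, b)
        if i < len(params) - 1:
            x = np.maximum(x, 0.0)  # ReLU (assumed activation)
    return x

rng = np.random.default_rng(0)
params = init_params(in_channels=1, rng=rng)
patch = rng.random((1, 32, 32))          # toy 32*32 input patch
output = forward(patch, params)          # shape (1, 16, 16) without padding
n_weights = sum(w.size + b.size for w, b in params)
receptive_field = 1 + sum(k - 1 for _, k in LAYERS)
```

Under these assumptions each output pixel depends on a 17*17 neighbourhood of the input, and the network has 216,961 trainable parameters with one input channel.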

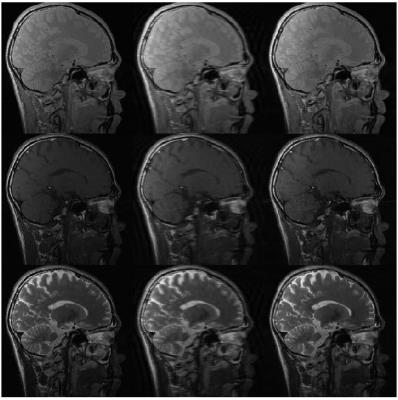

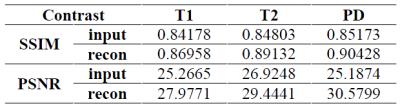
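The quantitative comparison for the in-vivo test is reported in SSIM and PSNR. As a minimal sketch of the PSNR part only, assuming magnitude images and taking the peak value from the fully sampled reference (the abstract does not state which peak convention was used), the hypothetical helper `psnr` below computes the metric in decibels.

```python
import numpy as np

def psnr(reference, estimate):
    """Peak signal-to-noise ratio in dB, with the peak taken from the reference image."""
    reference = np.asarray(reference, dtype=np.float64)
    estimate = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    peak = reference.max()
    return 10.0 * np.log10(peak ** 2 / mse)

# Toy example: a 4*4 reference and an estimate with one wrong pixel.
reference = np.zeros((4, 4))
reference[0, 0] = 1.0
estimate = reference.copy()
estimate[1, 1] = 0.5
toy_psnr = psnr(reference, estimate)
```

A higher PSNR for the network output than for the zero-filled undersampled image, measured against the same groundtruth, would indicate that lost information has been restored.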

Fig. 2 presents the three-contrast groundtruth images, the 1D partial Fourier undersampled MR images and the images reconstructed by the proposed network at an acceleration factor of 3. Many fine details and structures are restored by the proposed network compared with the undersampled multi-contrast MR images. The corresponding quantitative measurements (Table 1) also indicate that the proposed method has restored lost information.

Conclusion

This work designs and trains a deep convolutional neural network for multi-contrast MR imaging. The network absorbs prior information from a large number of existing high-quality multi-contrast images, namely knowledge of the correlations between the multi-contrast configurations and of the nonlinear relationship between the undersampled and fully sampled images. The experimental results show that the proposed method is able to restore fine structures and details while preserving the contrast.

Acknowledgements

Grant support: China NSFC 61471350, 61601450, the Natural Science Foundation of Guangdong 2015A020214019, 2015A030310314, the Basic Research Program of Shenzhen JCYJ20140610152828678, JCYJ20160531183834938, JCYJ20140610151856736 and the youth innovation project of SIAT under 201403 and US NIH R21EB020861 for Ying.

References

1. Granziera C, Daducci A, Donati A, Bonnier G, et al. A multi-contrast MRI study of microstructural brain damage in patients with mild cognitive impairment. NeuroImage: Clinical, 2015; 8:631-639.
2. Binjian S, Don P. G, Robert L and John N. O. Characterization of coronary atherosclerotic plaque using multicontrast MRI acquired under simulated in vivo conditions. JMRI, 2006; 24(4):833-841.
3. Berkin B, Vivek K. G, Elfar A. Multi-contrast reconstruction with Bayesian compressed sensing. MRM, 2011; 66(6):1601-1615.
4. Enhao G, Feng H, Kui Y, Wenchuan W, Shi W and Chun Y. PROMISE: Parallel-imaging and compressed-sensing reconstruction of multicontrast imaging using SharablE information. MRM, 2015; 73(2):523-535.
5. Angshul M, Rabab K. W. Joint reconstruction of multi-echo MR images using correlated sparsity. MRI, 2011; 29(7):899-906.
6. Shan W, Zhenghang S, Leslie Y, Xi P, Shun Z, et al. Accelerating magnetic resonance imaging via deep learning. IEEE ISBI, 2016; 514-517.
7. Shan W, Zhenghang S, Xi P and Dong L. Exploiting deep convolution neural network for fast resonance imaging. ISMRM, 2016; 1778.

Figures

Figure 1: A general description of the deep learning based multi-contrast MR imaging framework

Figure 2: From left to right and top to bottom: PD/T1/T2: groundtruth image, the undersampled MR image, the image reconstructed by our method from 33% data.

Table 1: The corresponding quantitative measurement in SSIM and PSNR for the in-vivo test